Embedded Vision everywhere?

Ask the Experts: Smarter Vision with Embedded Vision

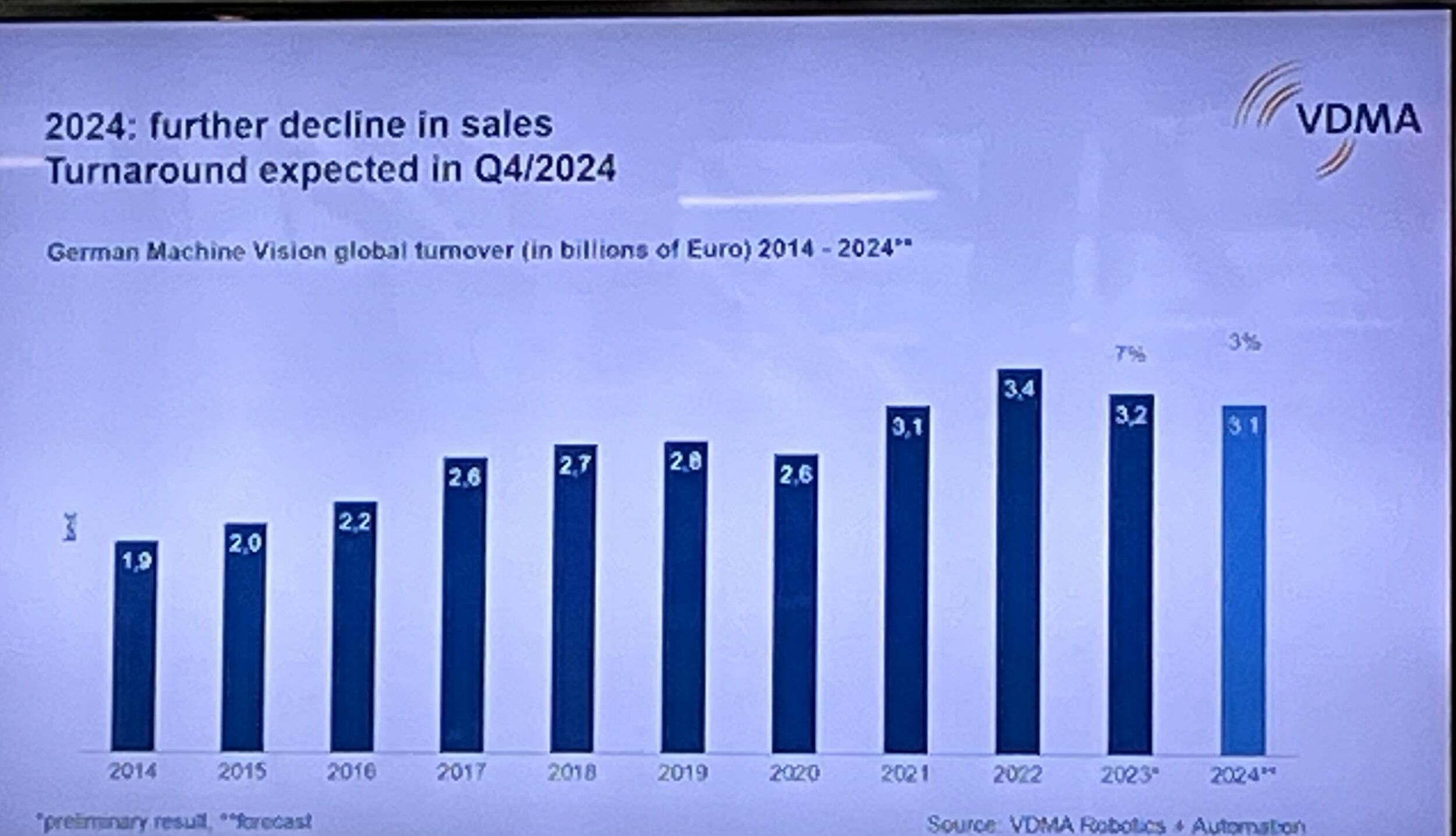

Everyone is talking about embedded vision. But what is embedded vision? A panel discussion at the last VISION fair, organized by the VDMA IBV, tried to answer this question and others: will embedded vision make machine vision more straightforward, more successful, or maybe cheaper?

What is Embedded Vision?

“If we get the same reliability we know from basic vision, then embedded vision could be a good deal.” – Andreas Behrens, Sick AG (Image: Sick AG)

Arndt Bake (Basler): We define it based on the processor that people are using. So we differentiate between PC vision, with a PC/Intel architecture, and if you use another architecture, like ARM processors, we call it embedded vision.

Olaf Munkelt (MVTec): There are several important factors. One is the form factor: devices are getting much smaller. Number two is low power consumption. We think customers deserve the same software power on an embedded system as they enjoy on a PC system.

Carsten Strampe (Imago): Our focus is to integrate all the interfaces needed for machine vision and all the processing power near and inside the machine. Therefore we support x86 platforms with Windows Embedded, but nowadays also ARM processors.

Maurice van der Aa (Advantech): From Advantech’s point of view, as global market leader for IPCs, the focus is on x86-based architecture to support the embedded vision market. With x86 technology combined with other architectures, like FPGA and/or GPU, we cover a wide range of the embedded vision market.

Richard York (ARM): For us embedded vision is more of a transformation: taking technologies from other markets, such as mobile or automotive, and bringing them into what is traditionally a world that is used to embedded PCs. Those technologies are typically an order of magnitude lower in cost and an order of magnitude lower in power, and there is a much greater ecosystem around them.

Andreas Behrens (Sick): Sick is a sensor company that knows how to deal with sensors in an embedded vision world. Make them smart and combine them with a clever ecosystem, so that you can embed these sensors easily into your machine.

Why will Embedded Vision change machine vision?

“With the embedded vision cost point, machine vision can break into markets where the technology was previously far too expensive.” – Arndt Bake, Basler AG (Image: Basler AG)

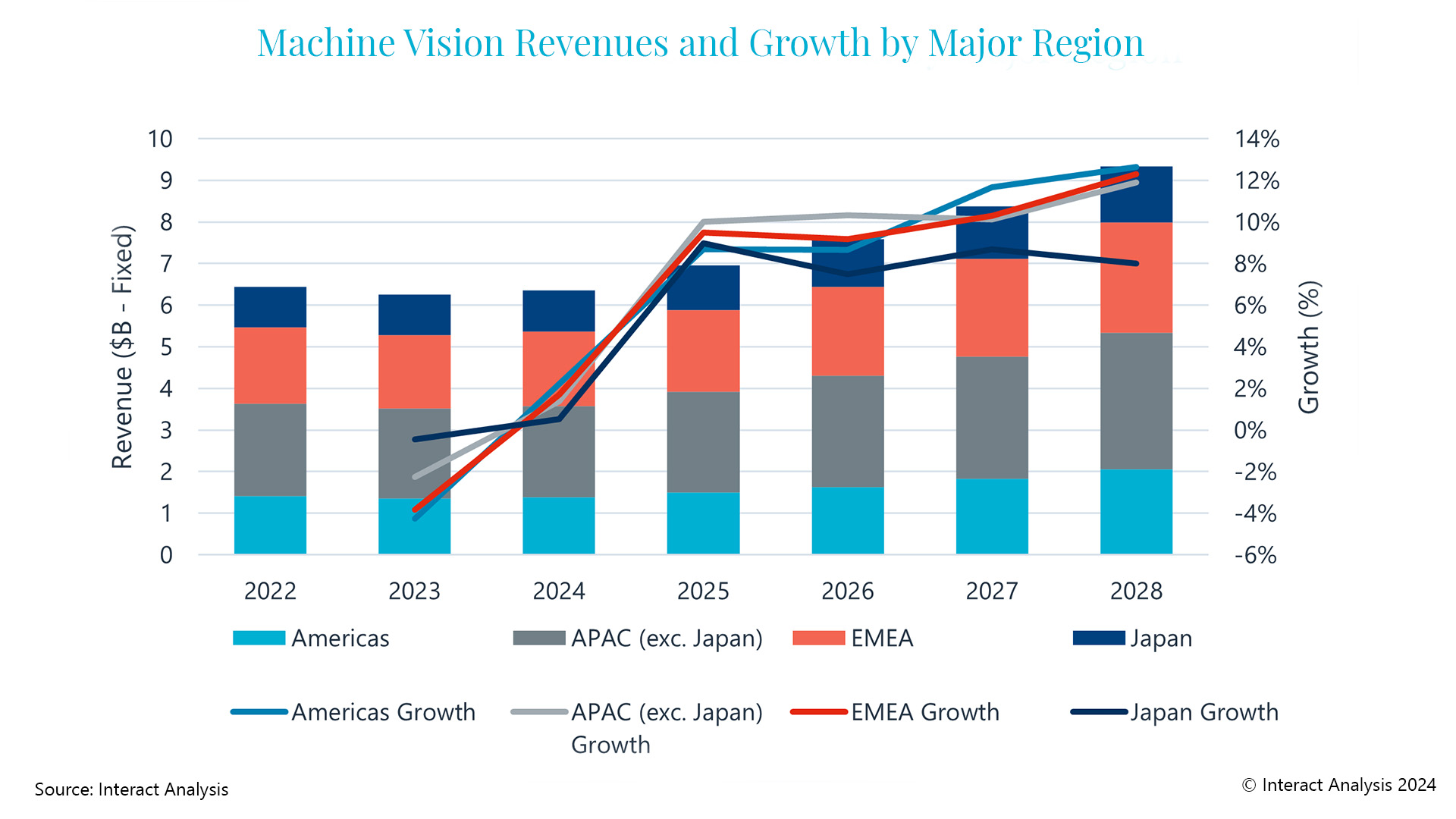

Bake: If you look at the hardware costs of a vision system today, a considerable part of what people pay is for the processing architecture. If you replace the Intel-based processing architecture with an embedded architecture, you can reduce the costs quite dramatically. Therefore vision will not stay in the factory any more. With this cost point it can break into markets where the technology was previously far too expensive.

Strampe: We see more powerful devices inside of the machine near the application, systems with lower power and more intelligent industrial interfaces.

Munkelt: First of all, it is a transformation process, which we see right now. It opens up new opportunities, new markets and new applications. The question is, how far will the transformation process go? If I look around at this fair, I see many new line scan cameras, delivering 18kHz line rates and filling up the main memory within a millisecond. So there are certainly many applications we still need to address with standard PC-based solutions. The question is: Where will we be in five years regarding this ratio?

van der Aa: Technology will become cheaper. It is not only about the front end, because you can make the front end very cost-effective, but you still need to be able to process it and this will be done on the back end. I believe in a two-way structure: Cost-effective on the front side and a high performance system with more computing power on the back-end of the application.

York: I was with a company recently doing retail analytics, putting an embedded system into lots of locations around the retail site to understand and monitor what the people on that site are doing. That opens up tremendous opportunities way beyond what we see here for technologies. Think of how we can apply that technology to other markets, which are adjacent but substantially higher volume, and can then offer interesting services as well.

Behrens: What about software and solving applications in an easy way? How do you embed it into your machine? I think there is a need to transform it a little bit into a new technology. We need to make it easy for integrators to build on a reliable system or sensor: a sensor that you can buy everywhere and get services for, and an ecosystem in which you can start using that sensor immediately, because it already comes prepared with firmware.