FPGA development platform for computer vision

Build custom FPGA-accelerated 10GigE Vision Frame Grabbers

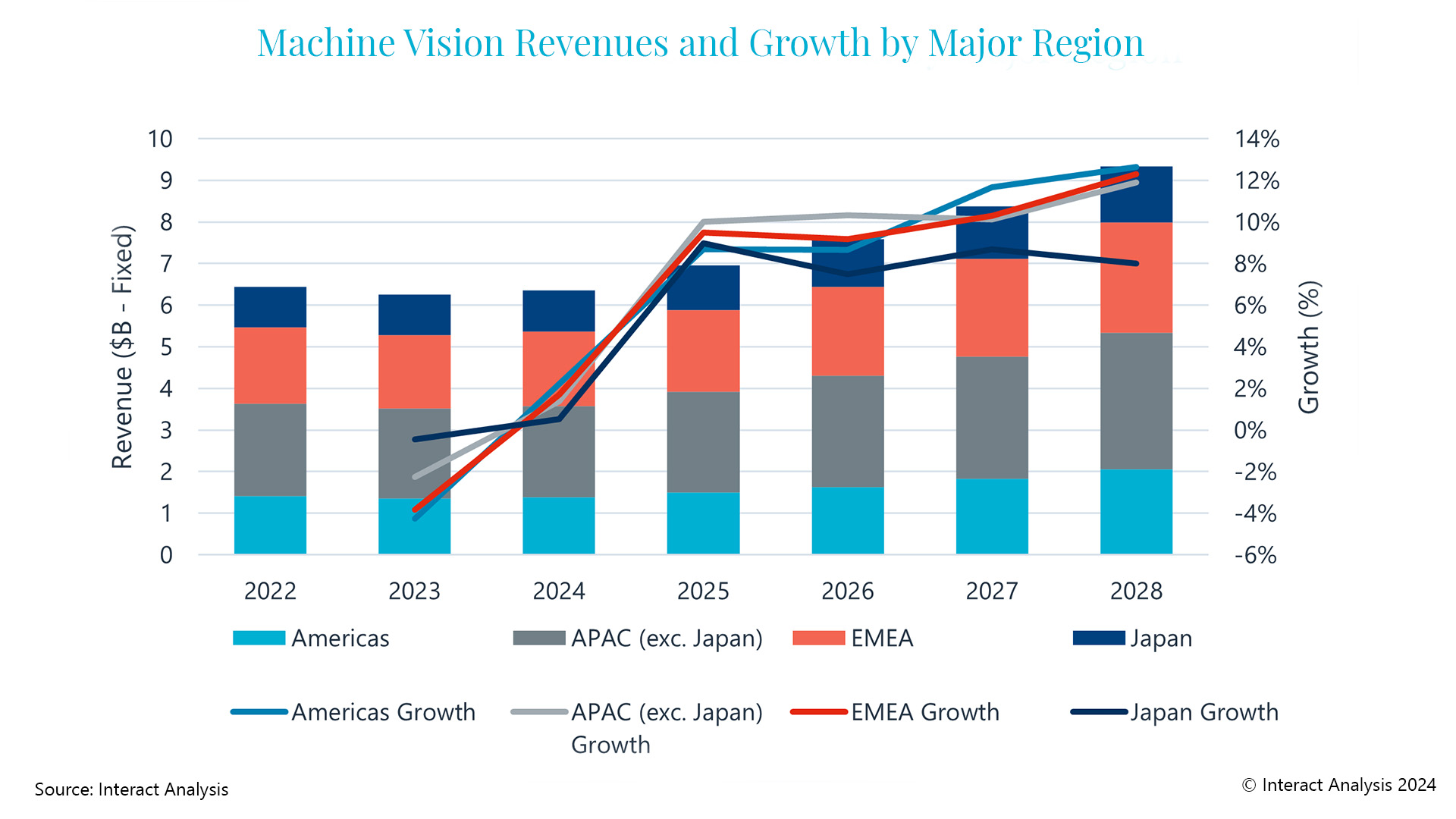

Version 2.0 of the GigE Vision standard enables video transport over 10GigE. As 10GigE Vision cameras become available, they are bundled with off-the-shelf 10G network interface cards (NICs) that serve as their frame grabbers. This avoids the need for specialized frame-grabbing hardware, but it creates an I/O bottleneck between the NIC and the host CPU, because NICs cannot compress or pre-process the video stream in hardware. The problem gets worse when multiple 10G video channels are required.

Figure 1: A 10GigE Vision intelligent frame grabber created with QuickPlay, the software-defined FPGA development environment. (Image: PLDA Group)

FPGAs are well suited to handling gigabytes of I/O throughput, and processing data on the fly in the FPGA increases system performance while reducing system latency. Traditionally, however, FPGA design requires specialized hardware skills and long design cycles. QuickPlay is a software-defined development environment that enables developers with no FPGA skills to build FPGA-accelerated systems, including intelligent vision systems. Complementing this development platform, QuickStore is an online marketplace where developers shop for IP that they can use seamlessly in QuickPlay to build their FPGA-accelerated applications.

The first step, assembling a 10GigE Vision frame grabber with embedded pre-processing, is straightforward in the QuickPlay IDE. As illustrated in Figure 1, the user drags and drops the required processing blocks (IP) from the built-in library or from the QuickStore catalog, or inserts their own processing kernels written in C or Verilog/VHDL, and so creates a dataflow representation of the FPGA design. This can be done graphically or in C++, as preferred. The dataflow model is based on a streaming architecture, which is well suited to real-time video processing. The hardware design is specified at the highest level of abstraction, without reference to hardware elements such as clocks, resets, buses, wires, FIFOs or DMA engines.

In the Figure 1 example, a 10GigE Vision intelligent frame grabber is created by combining one GigE Vision 2.0 controller IP with its associated data and control ports (GVSP for streaming, GVCP for control), a 2:1 packet splitter, a JPEG 2000 compression IP with its memory buffer, and a Sobel edge detection filter kernel developed in C. The compressed video and the Sobel-processed video are pushed out through two separate output ports.
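
The Sobel branch of this example is easy to picture in software. Below is a minimal host-side model in C-style C++, the kind of code that could be compiled with gcc and stepped through in gdb, assuming 8-bit grayscale frames and a splitter that copies every frame to both branches. The Frame type and the sobel() and split() functions are hypothetical names, not QuickPlay or QuickStore code; the model works on whole frames rather than GVSP packets, and the JPEG 2000 branch is left as a plain queue because a codec is far beyond a short sketch.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <queue>
#include <vector>

// Hypothetical frame type: grayscale, row-major, one byte per pixel.
struct Frame {
    int width = 0;
    int height = 0;
    std::vector<std::uint8_t> pixels;

    int at(int x, int y) const { return pixels[y * width + x]; }
};

// 3x3 Sobel edge detector. The gradient magnitude is approximated by
// |Gx| + |Gy| and clamped to 255; the one-pixel border is left at 0.
Frame sobel(const Frame& in) {
    assert(in.pixels.size() == static_cast<std::size_t>(in.width) * in.height);
    Frame out{in.width, in.height, std::vector<std::uint8_t>(in.pixels.size(), 0)};
    for (int y = 1; y + 1 < in.height; ++y) {
        for (int x = 1; x + 1 < in.width; ++x) {
            int gx = -in.at(x - 1, y - 1) + in.at(x + 1, y - 1)
                     - 2 * in.at(x - 1, y) + 2 * in.at(x + 1, y)
                     - in.at(x - 1, y + 1) + in.at(x + 1, y + 1);
            int gy = -in.at(x - 1, y - 1) - 2 * in.at(x, y - 1) - in.at(x + 1, y - 1)
                     + in.at(x - 1, y + 1) + 2 * in.at(x, y + 1) + in.at(x + 1, y + 1);
            int mag = std::abs(gx) + std::abs(gy);
            out.pixels[y * in.width + x] = static_cast<std::uint8_t>(mag > 255 ? 255 : mag);
        }
    }
    return out;
}

// Hypothetical splitter stand-in: each frame arriving on `in` is copied to
// both branches, so compression and edge detection see the same stream.
void split(std::queue<Frame>& in, std::queue<Frame>& toCodec, std::queue<Frame>& toEdges) {
    while (!in.empty()) {
        toCodec.push(in.front());
        toEdges.push(in.front());
        in.pop();
    }
}
```

A unit test could push a frame with a vertical step edge into the input queue, call split(), and check that sobel() marks the step while the codec branch receives the frame unchanged. The |Gx| + |Gy| approximation avoids a square root, a common choice for hardware-oriented kernels.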
This is just one example of an intelligent frame grabber: it performs JPEG 2000 compression and contour detection in parallel on the 10G video stream. The ability to insert custom processing kernels in C or Verilog/VHDL, together with IP from QuickStore, opens up many other possibilities.

The second step in the development process is validation of the dataflow model created in QuickPlay, using the native Linux gcc compiler and gdb debugger. Validation requires linking the design model, through the QuickPlay API, either to a unit test application or to the final application (i.e. the frame grabber application software). Figure 2 presents a subset of the API and illustrates the communication between the software application and the FPGA design.

The third step is the Build stage, in which the software model of the frame grabber is compiled into a hardware (i.e. HDL) representation. This step requires the user to specify:

- The target hardware platform for the design. QuickPlay is a platform-aware environment that shields users from the complexity of hardware implementation by offering pre-integrated and pre-validated boards and platforms to choose from. It supports a variety of FPGA boards from third-party vendors, some of which are suitable for computer vision acceleration. The example described here requires a board with at least one 10 Gb Ethernet (SFP+) connector for 10GigE video acquisition, one PCI Express connector for host interfacing, and one bank of onboard memory for the JPEG 2000 compression IP.

- The mapping and configuration of the design's input port, output ports and memory buffer to the board's physical interfaces and physical memory listed above: 10 Gigabit UDP/IP for the input port, PCI Express Gen3 for both output ports, and 4 GB of DDR3 SDRAM for the memory bank. The mapping takes only a few clicks. Input and output ports could be mapped to any supported physical interface (e.g. Ethernet, SDI or USB) with no change to the application software, because the communication API is agnostic to the physical interface.
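
The interface independence described in the second item can be illustrated with an abstract port in plain C++. In this sketch the application code (sendFrame) is written once against a hypothetical OutputPort interface, and the same call works whether the port is backed by host memory, as during software validation, or by a stand-in for a physical link. OutputPort, MemoryPort, CountingLinkPort and sendFrame are illustrative names, not the QuickPlay API, and CountingLinkPort merely counts bytes where a real PCI Express back-end would drive hardware.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <utility>
#include <vector>

using Packet = std::vector<std::uint8_t>;

// Hypothetical abstract output port: the application only sees
// "write a packet", never the physical transport behind it.
class OutputPort {
public:
    virtual ~OutputPort() = default;
    virtual void write(const Packet& p) = 0;
    virtual std::string transport() const = 0;
};

// Back-end for software validation: packets are kept in host memory.
class MemoryPort : public OutputPort {
public:
    void write(const Packet& p) override { sent.push_back(p); }
    std::string transport() const override { return "memory"; }
    std::vector<Packet> sent;
};

// Placeholder for a physical link such as PCIe or Ethernet: it only counts
// bytes here; a real back-end would hand packets to a driver.
class CountingLinkPort : public OutputPort {
public:
    explicit CountingLinkPort(std::string name) : name_(std::move(name)) {}
    void write(const Packet& p) override { bytes += p.size(); }
    std::string transport() const override { return name_; }
    std::size_t bytes = 0;
private:
    std::string name_;
};

// Application code, written once against OutputPort: splits a frame into
// packets of at most maxPayload bytes and returns the packet count.
std::size_t sendFrame(OutputPort& port, const Packet& frame, std::size_t maxPayload) {
    assert(maxPayload > 0);
    std::size_t packets = 0;
    for (std::size_t off = 0; off < frame.size(); off += maxPayload) {
        std::size_t len = std::min(maxPayload, frame.size() - off);
        auto first = frame.begin() + static_cast<std::ptrdiff_t>(off);
        port.write(Packet(first, first + static_cast<std::ptrdiff_t>(len)));
        ++packets;
    }
    return packets;
}
```

Swapping MemoryPort for another back-end changes only the object passed to sendFrame, which mirrors the point that remapping a port to a different physical interface leaves the application software untouched.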

The Implement stage is the fourth and final step. QuickPlay runs the FPGA vendor's tool suite entirely in the background, through to generation of the FPGA bitstream, which can then be loaded onto the target board. When the software application used in step two is run again, it now communicates with the FPGA hardware and produces the same output, only much faster. At any time, the user may customize or completely re-architect the design by replacing, modifying or adding processing elements, changing the physical I/O interfaces, or even selecting a different FPGA platform.

Conclusion

The ability to seamlessly integrate and interconnect IP from QuickStore, IP designed in-house in Verilog/VHDL or C, and built-in elements from the QuickPlay library gives computer vision professionals an easy way to build FPGA-augmented applications, be they intelligent frame grabbers or other smart video and image processing adapters, all without hardware or FPGA expertise.